A Telegram user who advertises their services on Twitter will create an AI-generated pornographic image of anyone in the world for as little as $10 if users send them pictures of that person. Like many other Telegram communities and users producing nonconsensual AI-generated sexual images, this user creates fake nude images of celebrities, including images of minors in swimsuits, but is particularly notable because it plainly and openly shows one of the most severe harms of generative AI tools: easily creating nonconsensual pornography of ordinary people.

This is only going to get easier. The djinn is out of the bottle.

“Djinn”, specifically, being the correct word choice. We’re way past fun-loving blue cartoon Robin Williams genies granting wishes, doing impressions of Jack Nicholson and getting into madcap hijinks. We’re back into fuckin’… shapeshifting cobras woven of fire and dust by the archdevil Iblis, hiding in caves and slithering out into the desert at night to tempt mortal men to sin. That mythologically-accurate shit.

Have you ever seen the Wishmaster movies?

Make. Your. Wishes.

Doesn’t mean distribution should be legal.

People are going to do what they’re going to do, and the existence of this isn’t an argument to put spyware on everyone’s computer to catch it or whatever crazy extreme you can take it to.

But distributing nudes of someone without their consent, real or fake, should be treated as the clear sexual harassment it is, and result in meaningful criminal prosecution.

Almost always it makes more sense to ban the action, not the tool. Especially for tools with such generalized use cases.

While I agree in spirit, any law surrounding it would need to be very clearly worded, with certain exceptions carved out. Which I’m sure wouldn’t happen.

I could easily see people thinking something was of them, when in reality it was of someone else.

I’m not familiar with the US laws, but… isn’t it already some form of crime or something to distribute nudes of someone without their consent? This should not change whether AI is involved or not.

It might depend on whether fabricating them wholesale would be considered a nude or not. Legally, it could be considered a different person if you’re making it, since the “nude” is someone else, and you’re putting their face on top, or it’s a complete fabrication made by a computer.

Unclear if it would still count if it was someone else and they were lying about it being the victim, for example, pretending a headless mirror-nude was sent by the victim, when it was sent by someone else.

As soon as anyone can do this on their own machine with no third parties involved all laws and other measures being discussed will be moot.

We can punish nonconsensual sharing but that’s about it.

As soon as anyone can do this on their own machine with no third parties involved

We’ve been there for a while now

Some people can, I wouldn’t even know where to start. And is the photo/video generator completely on home machines without any processing being done remotely already?

I’m thinking about a future where simple tools are available where anyone could just drop in a photo or two and get anything up to a VR porn video.

And is the photo/video generator completely on home machines without any processing being done remotely already?

Yes

Well…shit. It seems like any new laws are already too little too late then.

Stable Diffusion has been easily locally installed and runnable on any decent GPU for 2 years at this point.

Combine that with Civitai.com for easy to download and run models of almost anything you can imagine - IP, celebrity, concepts, etc… and the possibilities have been endless.

In fact, with completely free apps like Draw Things on iOS, which allows you to run it on YOUR PHONE locally - where you can download models, tweak, customize, hand it images directly from your mobile device’s library… making this stuff is now trivial on the go.

Tensor processors/AI accelerators have also been a thing on new hardware for a while. Mobile devices have them, Intel/Apple include them with their processors, and it’s not uncommon to find them on newer graphics cards.

That would just make it easier compared to needing quite a powerful computer for that kind of task.

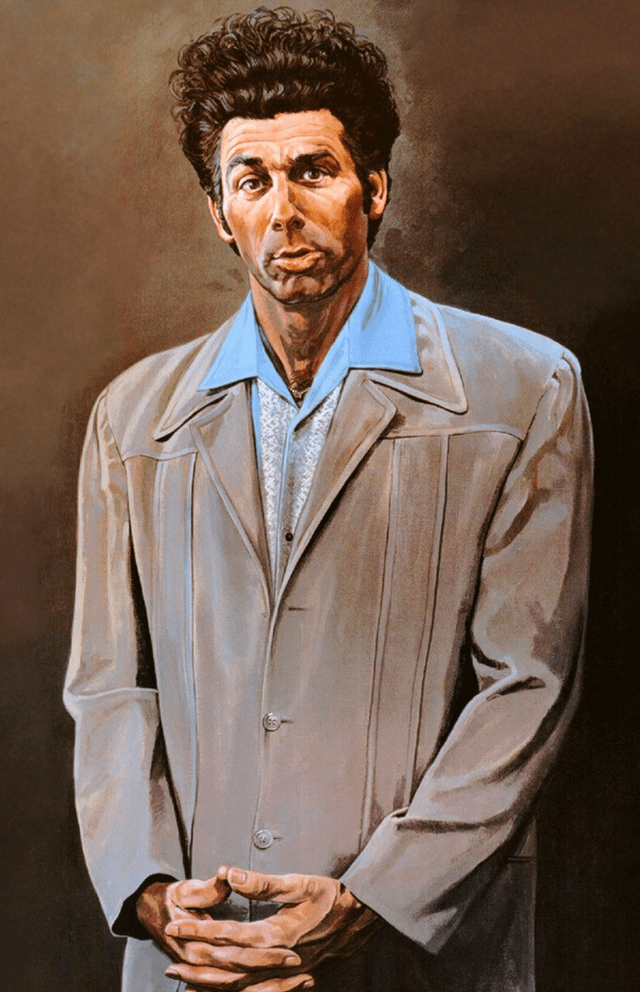

I can paint as many nude images of Rihanna as I want.

You may be sued for damages if you sell those nude paintings of Rihanna at a large enough scale that Rihanna notices

This is not new. People have been Photoshopping this kind of thing since before there was Photoshop. Why “AI” being involved matters is beyond me. The result is the same: fake porn/nudes.

And all the hand wringing in the world about it being non consensual will not stop it. The cat has been out of the bag for a long time.

I think we all need to shift to not believing what we see. It is counterintuitive, but also the new normal.

People have been Photoshopping this kind of thing since before there was Photoshop. Why “AI” being involved matters is beyond me

Because now it’s faster, can be generated in bulk and requires no skill from the person doing it.

I blame electricity. Before computers, people had to learn to paint to do this. We should go back to living like medieval peasants.

Those were the days…

This, but unironically.

As much skill as a 9 year old and a 16 year old can muster?

no skill from the person doing it.

This feels like an entire non sequitur, to the point of damaging any point you’re trying to make. Whether I paint a nude or the modern Leonardo da Vinci paints a nude, our rights (and/or the rights of the model, depending on your perspective on this issue) should be no different, despite the enormous chasm that exists between our artistic skill.

A kid at my high school in the early 90s would use a photocopier and would literally cut and paste yearbook headshots onto porn photos. This could also be done in bulk and doesn’t require any skills that a 1st grader doesn’t have.

Those are easily disproven. There’s no way you think that’s the same thing. If you can pull up the source photo and it’s a clear match/copy for the fake, it’s easy to disprove. AI can alter the angle, position, and expression on your face in a believable manner, making it a lot harder to link the photo to source material.

This was before Google was a thing, much less reverse lookup with Google Images. The point I was making is that this kind of thing happened even before Photoshop. Photoshop made it look even more realistic. AI is the next step. And even the current AI abilities are nothing compared to what they are going to be even 6 months from now. Yes, this is a problem, but it has been a problem for a long time and anyone who has wanted to create fake nudes of someone has had the ability to easily do so for at least a generation now. We might be at the point now where if you want to make sure you don’t have fake nudes created of you, then you don’t have images of yourself published. However now that everyone has high quality cameras in their pockets, this won’t 100% protect you.

But now they are photo realistic

And it’s looked as realistic as AI jobs?

Not relevant. Using someone’s picture never ever required consent.

I hate this: “Just accept it women of the world, accept the abuse because it’s the new normal” techbro logic so much. It’s absolutely hateful towards women.

We have legal and justice systems to deal with this. It is not the new normal for me to be able to make porn of your sister, or mother, or daughter. Absolutely fucking abhorrent.

I don’t know why you’re being down voted. Sure, it’s unfortunately been happening for a while, but we’re just supposed to keep quiet about it and let it go?

I’m sorry, putting my face on a naked body that’s not mine is one thing, but I really do fear for the people whose likeness gets used in some degrading/depraved porn and it’s actually believable because it’s AI generated. That is SO much worse/psychologically damaging if they find out about it.

It’s unacceptable.

We have legal and justice systems to deal with this.

For reference, here’s how we’re doing with child porn. Platforms with problems include (copying from my comment two months ago):

Ill adults and poor kids generate and sell CSAM. Common to advertise on IG, sell on TG. Huge problem as that Stanford report shows.

Telegram got right on it (not). Fuckers.

How do you propose to deal with someone doing this on their computer, not posting them online, for their “enjoyment”? Mass global surveillance of all existing devices?

It’s not a matter of willingly accepting it; it’s a matter of looking at what can be done and what can not. Publishing fake porn, defaming people, and other similar actions are already (I hope… I am not a lawyer) illegal. Asking for the technology that exists, is available, will continue to grow, and can be used in a private setting with no witness to somehow “stop” because of a law is at best wishful thinking.

There’s nothing to be done, nor should be done, for anything someone individually creates, for their own individual use, never to see the light of day. Anything else is about one step removed from thought policing - afterall what’s the difference between a personally created, private image and the thoughts on your brain?

The other side of that is, we have to have protection for people who this has or will be used against. Strict laws regarding posting or sharing material. Easy and fast removal of abusive material. Actual enforcement. I know we have these things in place already, but they need to be stronger and more robust. The one absolute truth with generative AI, versus Photoshop etc is that it’s significantly faster and easier, thus there will likely be an uptick in this kind of material, thus the need for re-examining current laws.

Because gay porn is a myth I guess…

And so is straight male-focused porn. We men seemingly are not attractive, other than for perfume ads. It’s unbelievable gender roles are still so strongly coded in 2024. Women must be pretty, men must buy products where women look pretty in ads. Men don’t look pretty and women don’t buy products - they clean the house and care for the kids.

I’m aware of how much I’m extrapolating, but a lot of this is the subtext under “they’ll make porn of your sisters and daughters” but leaving out of the thought train your good looking brother/son, when that’d be just as hurtful for them and yourself.

Or your bad looking brother or the bad looking myself.

Imo people making ai fakes for themselves isn’t the end of the world but the real problem is in distribution and blackmail.

You can get blackmailed no matter your gender and it will happen to both genders.

Sorry if I didn’t position this about men. They are the most important thing to discuss and will be the most impacted here, obviously. We must center men on this subject too.

Pointing out your sexism isn’t saying we should be talking about just men. It’s you who’s here acting all holy while ignoring half of the population.

Yes yes, #alllivesmatter amirite? We just ignore that 99.999% of the victims will be women, just so we can grandstand about men.

It’s not normal, but neither is it new: you already could cut and glue your cousin’s photo on a Playboy girl, or Photoshop the hot neighbour onto Stallone’s muscle body. Today it’s just easier.

I don’t care if it’s not new, no one cares about how new it is.

typical morning for me.

I suck at Photoshop and I’ve tried many times to get good at it over the years. I was able to train a local stable diffusion model on my and my family’s faces and create numerous images of us in all kinds of situations in 2 nights of work. You can get a snap of someone and have nudes of them tomorrow for super cheap.

I agree there is nothing to be done, but it’s painfully obvious to me that the scale and ease of it make it much more concerning.

Also the potential for automation/mass-production. Photoshop work still requires a person to sit down to do the actual photoshop. You can try to script things out, but it’s hardly an easy affair.

By comparison, generative models are much more hands-free. Once you get the basics set up, you can just have it go, and churn things at rates well surpassing what a single human could reasonably do (if you have the computing power for it).

The same reason AR15 rifles are different than muskets

This kind of attitude toward non-consensual actions is what perpetuates them. Fuck that shit.

Scale.

Exactly this. And instead trust cryptographically signed images, checked by comparing an image’s hash with the one supplied by the owner. Then it’s rather a question of trusting a specific source for a specific kind of content. A news photo of the war in Ukraine by the BBC? Check the hash on their site. Their reputation is finished if a false image is found.

At the same time, that does introduce an additional layer of work. Most people aren’t going to do that just for the extra work that it would involve, in much the same way that people today won’t track an image back to the original source, but usually just go by the one that they saw.

Especially for people who aren’t so cryptographically or technologically inclined that they know what a hash is, where to find one, and how to compare it (without just opening them both and checking personally).

Sure but that’s no problem if software would do that automatically for users of big (news) sites. Browsers on desktop and apps on phones.
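For anyone wondering what that automatic check would even look like, here is a rough sketch in Python. It only covers the "compare hashes" part, not real signatures, and everything in it is made up for illustration: the function names are hypothetical, and the "image" is just stand-in bytes rather than a file pulled from an actual news site.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_published_hash(image_bytes: bytes, published_hex: str) -> bool:
    """Check whether an image's digest equals the hash the publisher lists."""
    actual = sha256_hex(image_bytes)
    # compare_digest does a constant-time comparison of the two hex strings
    return hmac.compare_digest(actual, published_hex.strip().lower())


# Hypothetical data: stand-in bytes for a photo, and the hash a site might publish
original = b"stand-in bytes for a news photo"
published = sha256_hex(original)
tampered = original + b"!"  # any change to the bytes changes the digest

print(matches_published_hash(original, published))  # True
print(matches_published_hash(tampered, published))  # False
```

Worth noting that a plain hash only tells you the file is byte-for-byte what the site lists, so a resized or re-encoded copy would fail the check even if it is honest. A browser or app doing this for users would presumably need proper signatures on top.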

But I saw it on tee-vee!

The irony of parroting this mindless and empty talking point is probably lost on you.

God, do I really have to start putting the /jk or /s back, for those who don’t get it like you??

Upgraded to “definitely.”

Okay, okay, you won. Happy now? Now go.

Ok thanks

Why “AI” being involved matters is beyond me.

The AI hysteria is real, and clickbait is money.

This is something I can’t quite get through to my wife. She does not like that I dismiss things to some degree when they do not make sense. We get into these convos where I’m like, “I have serious doubts about this,” and she is like, “Are you saying it did not happen?” and I’m like, “No. It may have happened, but not in quite the way they say, or it’s being portrayed in a certain manner.” I’m still going to take video and photos for now as being likely true, but I generally want to see it from independent sources, like different folks with their phones along with CCTV of some kind and such.

Ok so pay the dude $10 to put your wife’s head on someone agreeing with you. Problem solved.

I didn’t expect to get a laugh out of reading this discussion, thanks.

lol. there you go. hey you cheated on me. its in this news article right here.

To people who aren’t sure if this should be illegal or what the big deal is: according to Harvard clinical psychiatrist and instructor Dr. Alok Kanojia (aka Dr. K from HealthyGamerGG), once non-consensual pornography (which deepfakes are classified as) is made public over half of people involved will have the urge to kill themselves. There’s also extreme risk of depression, anger, anxiety, etc. The analogy given is it’s like watching video the next day of yourself undergoing sex without consent as if you’d been drugged.

I’ll admit I used to look at celeb deepfakes, but once I saw that video I stopped immediately and avoid it as much as I possibly can. I believe porn can be done correctly with participant protection and respect. Regarding deepfakes/revenge porn though that statistic about suicidal ideation puts it outside of healthy or ethical. Obviously I can’t make that decision for others or purge the internet, but the fact that there’s such regular and extreme harm for the (what I now know are) victims of non-consensual porn makes it personally immoral. Not because of religion or society but because I want my entertainment to be at minimum consensual and hopefully fun and exciting, not killing people or ruining their happiness.

I get that people say this is the new normal, but it’s already resulted in trauma and will almost certainly continue to do so. Maybe even get worse as the deepfakes get more realistic.

once non-consensual pornography (which deepfakes are classified as) is made public over half of people involved will have the urge to kill themselves.

Not saying that they are justified or anything but wouldn’t people stop caring about them when they reach a critical mass? I mean if everyone could make fakes like these, I think people would care less since they can just dismiss them as fakes.

The analogy given is it’s like watching video the next day of yourself undergoing sex without consent as if you’d been drugged.

You want a world where people just desensitise themselves to things that make them want to die through repeated exposure. I think you’ll get a whole lot of complex PTSD instead.

People used to think their lives are over if they were caught alone with someone of the opposite sex they’re not married to. That is no longer the case in western countries due to normalisation.

The thing that makes them want to die is societal pressure, not the act itself. In this case, if societal pressure from having fake nudes of yourself spread is removed, most of the harm done to people should be neutralised.

Agreed.

"I’ve been in HR since '95, so yeah, I’m old, lol. Noticed a shift in how we view old social media posts? Those wild nights you don’t remember but got posted? If they’re at least a decade old, they’re not as big a deal now. But if it was super illegal, immoral, or harmful, you’re still in trouble.

As for nudes, they can be both the problem and the solution.

To sum it up, like in the animated movie ‘The Incredibles’: ‘If everyone’s special, then no one is.’ If no image can be trusted, no excuse can be doubted. ‘It wasn’t me’ becomes the go-to, and nobody needs to feel ashamed or suicidal over something fake that happens to many.

Of course, this is oversimplifying things in the real world but society will adjust. People won’t kill themselves over this. It might even be a good thing for those on the cusp of AI and improper real world behaviours - ‘It’s not me. It’s clearly AI, I would never behave so outrageously’.

The thing that makes them want to die is societal pressure, not the act itself.

That’s an assumption that you have no evidence for. You are deciding what feelings people should have by your own personal rules and completely ignoring the people who are saying this is a violation. What gives you the right to tell people how they are allowed to feel?

I think this is realistically the only way forward. To delegitimize any kind of nudes that might show up of a person. Which could be good. But I have no doubt that highschools will be flooded with bullies sending porn around of innocent victims. As much as we delegitimize it as a society, it’ll still have an effect. Like social media, though it’s normal for anyone to reach you at any time, It still makes cyber bullying more hurtful.

Well if you are sending nudes to someone in high school you are sending porn to a minor. Which I am pretty confident is illegal already. I just would rather not search for that law.

I’m wondering if this may already be illegal in some countries. Revenge porn laws now exist in some countries, and I’m not sure if the legislation specifies how the material should be produced to qualify. And if the image is based on a minor, that’s often going to be illegal too - some places I hear even pornographic cartoons are illegal if they feature minors. In my mind people who do this shit are doing something pretty similar to putting hidden cameras in bathrooms.

The technology will become available everywhere and run on every device over time. Nothing will stop this

That’s a ripoff. It costs them at most $0.1 to do simple stable diffusion img2img. And most people could do it themselves, they’re purposefully exploiting people who aren’t tech savvy.

I have no sympathy for the people who are being scammed here, I hope they lose hundreds to it. Making fake porn of somebody else without their consent, particularly that which could be mistaken for real if it were to be seen by others, is awful.

I wish everyone involved in this use of AI a very awful day.

Imagine hiring a hit man and then realizing he hired another hit man at half the price. I think the government should compensate them.

Nested hit man scalpers taking advantage of overpaying client.

it’s a “I don’t know tech” tax

The people being exploited are the ones who are the victims of this, not people who paid for it.

There are many victims, including the perpetrators.

It seems like there’s a news story every month or two about a kid who kills themselves because videos of them are circulating. Or they’re being blackmailed.

I have a really hard time thinking of the people who spend ten bucks making deep fakes of other people as victims.

I have a really hard time thinking

Your lack of imagination doesn’t make the plight of non-consensual AI-generated porn artists any less tragic.

Writing /s would have implied that my fellow lemurs don’t get jokes, and I give them more credit than that.

Some people just don’t have a sense of humor.

And those people are YOU!!

Thanks for the finger-wagging, you moralistic rapist!

My sarcasm detector is between 8.5-9.5 outta ten.

Missed it this time, FWIW!

No one’s a victim no one’s being exploited. Same as taping a head on a porno mag.

That’s like 80% of the IT industry.

IDK, $10 seems pretty reasonable to run a script for someone who doesn’t want to. A lot of people have that type of arrangement for a job…

That said, I would absolutely never do this for someone, I’m not making nudes of a real person.

Scam is another thing. Fuck these people selling.

But fuck, dude, they aren’t taking advantage of anyone buying the service. That’s not how the fucking world works. It turns out that even you, if you have money, can pay people to do shit like clean your house or do an oil change.

NOBODY on that side of the equation is being exploited 🤣

In my experience with SD, getting images that aren’t obviously “wrong” in some way takes multiple iterations with quite some time spent tuning prompts and parameters.

Wait? This is a tool built into stable diffusion?

In regards to people doing it themselves, it might be a bit too technical for some people to set up. But I’ve never tried stable diffusion.

It’s not like deep fake pornography is “built in” but Stable Diffusion can take existing images and generate stuff based on it. That’s kinda how it works really. The de-facto standard UI makes it pretty simple, even for someone who’s not too tech savvy: https://github.com/AUTOMATIC1111/stable-diffusion-webui

Img2img isn’t always spot-on with what you want it to do, though. I was making extra pictures for my kid’s bedtime books that we made together and it was really hit or miss. I’ve even goofed around with my own pictures to turn myself into various characters and it doesn’t work out like you want it to much of the time. I can imagine it’s the same when going for porn, where you’d need to do numerous iterations and tweaking over and over to get the right look/facsimile. There are tools/SD plugins like Roop which do make transferring over faces with img2img easier and more reliable, but even then it’s still not perfect. I haven’t messed around with it in several months, so maybe it’s better and easier now.

It depends on the models you use too. There’s specific training models data out there and all you need to do is give it a prompt of “naked” or something and it’s scary good at making something realistic in 2 minutes. But yeah, there is a learning curve at setting everything up.

Thanks for the link, I’ve been running some LLMs locally, and I have been interested in stable diffusion. I’m not sure I have the specs for it at the moment though.

An iPhone from 2018 can run Stable Diffusion. You can probably run it on your computer. It just might not be very fast.

By the way, if you’re interested in Stable Diffusion and it turns out your computer CAN’T handle it, there are sites that will let you toy around with it for free, like civitai. They host an enormous number of models and many of them work with the site’s built in generation.

Not quite as robust as running it locally, but worth trying out. And much faster than any of the ancient computers I own.

And mechanics exploit people needing brake jobs. What’s your point?

I doubt tbh that this is the most severe harm of generative AI tools lol

Pretty sure we will see fake political candidates that actually garner votes soon here.

The Waldo Moment manifest.

Re-elect Deez Nuts.

Israeli racial recognition program for example

FR is not generative AI, and people need to stop crying about FR being the boogieman. The harm that FR can potentially cause has been covered and surpassed by other forms of monitoring, primarily smartphone and online tracking.

I wholeheartedly disagree on it being surpassed

If someone doesn’t have a phone and doesn’t go online then they can still be tracked by facial recognition. Someone who has never agreed to any Terms and Conditions can still be tracked by facial recognition

I don’t think there’s anything as dubious as facial recognition due to its ability to track almost anyone regardless of involvement with technology

You don’t need to be online or use a digital device to be tracked by your metadata. Your credit card purchases, phone calls, vehicle license plate, and more can all be correlated.

Additionally, saying “just don’t use a phone” is no different than saying “just wear a mask outside your house”. Both are impractical, if not functionally impossible, in modern society

I’m not arguing which is “worse”, only speaking to the reality we live in

I am arguing which is worse. There are people in Palestine who don’t have the internet, don’t have a phone, and don’t have a credit card. How are they being tracked without facial recognition?

I also didn’t say don’t use a phone. I don’t know where you got that

I know what you’re arguing and why you’re arguing it and I’m not arguing against you.

I’m simply adding what I consider to be important context

And again, the things I listed specifically are far from the only ways to track people. Shit, we can identify people using only the interference their bodies create in a wifi signal, or their gait. There are a million ways to piece together enough details to fingerprint someone. Facial recognition doesn’t have a monopoly on that bit of horror

FR is the buzzword boogieman of choice, and the one you are most aware of because people who make money from your clicks and views have shoved it in front of your face. But go ahead and tell me about what the “real threat” is 👍👍👍

I didn’t say “real threat” either. I’m not sure where you’re getting these things I’m not saying

I think facial recognition isn’t as much of a “buzzword” as much as it is just the most prevalent issue that affects the most people. Yes there are other ways to track people, but none that allow you to easily track everybody regardless of their involvement with modern technology other than facial recognition

(Just to be clear I’m not downvoting you)

I’m pretty sure the AI enabled torture nexus is right around the corner.

Porn of Normal People

Why did they feel the need to add that “normal” to the headline?

To differentiate from celebrities.

Because it’s different to somebody going online and finding a stock picture of Taylor Swift

People who have Wikipedia articles have less of an expectation of privacy than normal people

This telegram user has a hard stance on “weirdos”.

You can get 300 tokens in pornx dot ai for $9.99. This guy is ripping people off.

We are acting as if, throughout history, we managed to orient technology so as to keep only the benefits and eliminate the negative effects, while in reality most of the technology we use still comes with both aspects. And it is not gonna be different with AI.

I’d like to share my initial opinion here. “Nonconsensual AI-generated nudes” is technically a freedom, no? Like, we can bastardize our presidents, paste people’s photos on devils or other characters, so why are AI nudes where the line is drawn? The internet made photos of trump and putin kissing shirtless.

It’s a far cry from making weird memes to making actual porn. Especially when it’s not easily seen as fake.

I think their point is where is the line and why is the line where it is?

Psychological trauma. Normal people aren’t used to dealing with that and even celebrities seek help for it. Throw in the transition period where this technology is not widely known and you have careers on the line too.

They’re making pornography of women who are not consenting to it when that is an extremely invasive thing to do that has massive social consequences for women and girls. This could (and almost certainly will) be used on kids too right, this can literally be a tool for the production of child pornography.

Even with regards to adults, do you think this will be used exclusively on public figures? Do you think people aren’t taking pictures of their classmates, of their co-workers, of women and girls they personally know and having this done to pictures of them? It’s fucking disgusting, and horrifying. Have you ever heard of the correlation between revenge porn and suicide? People literally end their lives when pornographic material of them is made and spread without their knowledge and consent. It’s terrifyingly invasive and exploitative. It absolutely can and must be illegal to do this.

It absolutely can and must be illegal to do this.

Given that it can be done in a private context and there is absolutely no way to enforce it without looking into random people’s computer unless they post it online publicly, you’re just asking for a new law to reassure people with no effect. That’s useless.

No, I’m saying make it so that you go to prison for taking pictures of someone and making pornography of them without their consent. Pretty straightforward. If you’re found doing it, off to rot in prison with you.

Strange of you to respond to a comment about the fakes being shared in this way…

Do you have the same prescriptions in relation to someone with a stash of CSAM, and if not, why not?

No. Because in one case, someone ran a program on his computer and the output might hurt someone else’s feelings if they ever find out, and in the other case people/kids were exploited for sexual purposes to begin with and their lives torn apart regardless of the diffusion of the stuff?

How is that a hard concept to understand?

What’s hard to understand is why you skipped the question I asked, and answered a different one instead.

The creation of the CSAM is unquestionably far more harmful, but I wasn’t talking about the *creation* - I was talking about the possession. The harm of the creation is already done, and whether or not the material exists after that does nothing to undo that harm.

Again, is your prescription the same as it relates to the possession, not generation of CSAM?

How can you describe your friends, family, co-workers, peers, making and sharing pornography of you, and say that it comes down to hurt feelings??? It’s taking someone’s personhood, their likeness, their autonomy, their privacy, and reducing them down to a sexual act for which they provide no knowledge or consent. And you think this stays private?? Are you kidding me?? Men have literally been caught making snapchat groups dedicated to sharing their partner’s nudes without their consent. You either have no idea what you’re talking about or you are intentionally downplaying the seriousness of what this is. Like I said in my original comment, people contemplate and attempt suicide when pornographic content is made and shared of them without their knowledge and consent. This is an incredibly serious discussion.

It is people like you, yes you specifically, that provide the framework by which the sexual abuse of women is justified.

I agree. For any guy here who doesn’t care, imagine one of your “friends” made AI porn of you where you have a micropenis and erection problems. I doubt you would be over the moon about it. Or if that doesn’t work, imagine it was someone you love. Maybe you don’t want your grandma’s face in a porn.

Seems to fall under any other form of legal public humiliation to me, UNLESS it is purported to be true or genuine. I think if there’s a clear AI watermark or artist’s signature, that’s free speech. If not, it falls under Libel - false and defamatory statements or facts, published as truth. Any harmful deepfake released as truth should be prosecuted as Libel or Slander, whether it’s sexual or not.

The internet made photos of Trump and Putin kissing shirtless.

And is that OK? I mean I get it, free speech, but just because Congress can’t stop you from expressing something doesn’t mean you actually should do it. It’s basically bullying.

Imagine you meet someone you really like at a party, they like you too and look you up on a social network… and find galleries of hardcore porn with you as the star. Only you’re not a porn star, those galleries were created by someone who specifically wanted to hurt you.

AI porn without consent is clearly illegal in almost every country in the world, and in the ones where it’s not illegal yet, it will be soon. The First Amendment will be a stumbling block, but it’s not an impenetrable wall - Congress can pass laws that limit speech in certain edge cases, and this will be one of them.

The internet made photos of Trump and Putin kissing shirtless.

And is that OK?

I’m going to jump in on this one and say yes - it’s mostly fine.

I look at these things through the lens of the harm they do and the benefits they deliver - consequentialism and act utilitarianism.

The benefits are artistic, comedic and political.

The “harm” is that Putin and/or Trump might feel bad, maaaaaaybe enough that they’d kill themselves. All that gets put back up under benefits as far as I’m concerned - they’re both extremely powerful monsters that have done and will continue to do incredible harm.

The real harm is that such works risk normalising this treatment of regular folk, which is genuinely harmful. I think that’s unlikely, but it’s impossible to rule out.

Similarly, the dissemination of the kinds of AI fakes under discussion is a negative because they do serious, measurable harm.

I think that is okay because there was no intent to create pornography there. It is a political statement. As far as I am concerned that falls under free speech. It is completely different from creating nudes of random people/celebrities with the sole purpose of wanking off to it.

Is that different than wanking to clothed photos of the same people?

The difference is that the image is fake but you can’t really see that it’s fake. It’s so easily created using these tools and can be used to harm people.

The issue isn’t that you’re jerking off to it. The issue is that it can create fake photos of people in situations that can be incredibly difficult to deny really happened.

I think the biggest thing with that is Trump and Putin live public lives. They live lives scrutinized by media and the public. They bought into those lives, they chose them. Due to that, there are certain things that we push that wouldn’t necessarily be legal if we did them to a normal, private citizen, but because their life is already public we turn a bit of a blind eye. And yes, this applies to celebrities, too.

I don’t necessarily think the above is a good thing. I think everyone should be entitled to some privacy, but having the same thing done to a normal person living a private life is a MUCH more clear violation of privacy.

Public figures vs private figures. Fair or not, a public figure is usually open season. Go ahead and make a comic where Ben Stein rides a horse home to his love nest with Ben Stiller.

Lemme put it this way. Freedom of speech isn’t freedom from consequences. You talk shit, you’re gonna get hit. Is it truly freedom if you’re infringing on someone else’s rights?

Yeah you don’t have the right to prevent people from drawing pictures of you, but you do have the right not to get hit by some guy you’re drawing.

Don’t get me wrong it’s unsettling, but I agree, I don’t see the initial harm. I see it as creating a physical manifestation of someone’s inner thoughts. I can definitely see how it could become or encourage dangerous situations, but that’s like banning alcohol because it could lead to drunk driving or sexual assault.

Innocently drinking alcohol is in NO WAY comparable to creating deepfakes of people without consent.

One is an innocent act that has potentially harsh consequences, the other is a disgusting and invasively violating act that has the potential to ruin an innocent person’s life.

You don’t even need to pay, some people do it for free on /b/

It’s stuff like this that makes me against copyright laws. To me it is clear and obvious that you own your own image, and it is far less obvious to me that a company can own an image that its creator drew multiple decades ago and that everyone can identify. And yet one is protected and the other isn’t.

What the hell do you own if not yourself? How come a corporation has more rights than we do?

This stuff should be defamation, full stop. There would need to be a law specifically saying you can’t sign it away, though.

Get out of the way of progress

It was gonna suck no matter what once the technology became available. Perhaps in a bunch of generations there will be a massive cultural shift to something less toxic.

May as well drink the poison if I’m gonna be immersed in it. Cheers.

I was really hoping that with the onset of AI people would be more skeptical of content they see online.

This was one of the reasons. I don’t think there’s anything we can do to prevent people from acting like this, but what we can do as a society is adjust to it so that it’s not as harmful. I’m still hoping that the eventual onset of it becoming easily accessible and useable will help people to look at all content much more closely.

We need to shut this whole coomer thing down until we work out wtf is going on in their brains.

Unironically though, anyone who does this should just be locked up

As long as there are simps, there will always be this bullshit. And there will always be simps, because it isn’t illegal to be pathetic.

Come on, prisons are overpopulated as it is. If that happens then you, me, and everyone here are fucked.

I’m not a simp so it’s not a problem for me.

I wonder how holodecks handle this…

They send you to therapy because “it’s not healthy to live in a fantasy.”

Don’t be like Lt Reg Barclay

Probably the same types of guardrails ChatGPT has when you ask it to tell you how to cook meth or build a dirty bomb

And maybe Data was distributing jailbroken holodeck programs for pervs on the ship