It’s time to call a spade a spade. ChatGPT isn’t just hallucinating. It’s a bullshit machine.

From TFA (thanks @mxtiffanyleigh for sharing):

“Bullshit is ‘any utterance produced where a speaker has indifference towards the truth of the utterance’. That explanation, in turn, is divided into two ‘species’: hard bullshit, which occurs when there is an agenda to mislead, or soft bullshit, which is uttered without agenda.”

“ChatGPT is at minimum a soft bullshitter or a bullshit machine, because if it is not an agent then it can neither hold any attitudes towards truth nor towards deceiving hearers about its (or, perhaps more properly, its users’) agenda.”

https://futurism.com/the-byte/researchers-ai-chatgpt-hallucinations-terminology

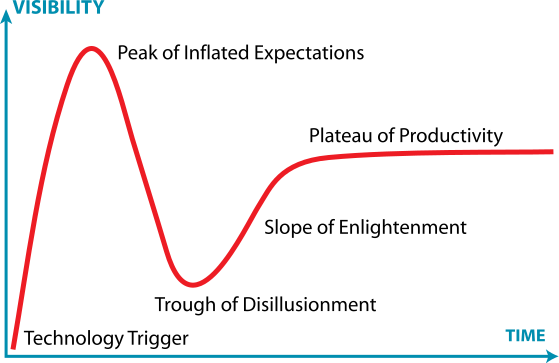

Congratulations, you have now arrived at the Trough of Disillusionment:

It remains to be seen if we can ever climb the Slope of Enlightenment and arrive at reasonable expectations and uses for LLMs. I personally believe it’s possible, but we need to get vendors and managers to stop trying to sprinkle “AI” in everything like some goddamn Good Idea Fairy. LLMs are good for providing answers to well-defined problems which can be answered with existing documentation. When the problem is poorly defined and/or the answer isn’t as well documented or has a lot of nuance, they then do a spectacular job of generating bullshit.

The marketing spin calling LLMs “Artificial Intelligence” doesn’t help.

Same as it ever was with the AI hype cycle.

> reasonable expectations and uses for LLMs.

LLMs are only ever going to be a single component of an AI system. We’ve only had LLMs with their current capabilities for a very short time period, so the research and experimentation to find optimal system patterns, given the capabilities of LLMs, has necessarily been limited.

> I personally believe it’s possible, but we need to get vendors and managers to stop trying to sprinkle “AI” in everything like some goddamn Good Idea Fairy.

That’s a separate problem. Unless it leads to less research into improving the systems that leverage LLMs, e.g., by creating pervasive negative sentiment toward AI, it won’t have a negative effect on the progress of that research. Quite the opposite, in fact: seeing which uses of AI succeed and which don’t (success here being measured by customer acceptance and interest, not by the AI’s efficacy) is information that can help direct and inspire research avenues.

> LLMs are good for providing answers to well-defined problems which can be answered with existing documentation.

Clarification: LLMs are not reliable at this task, but we have patterns for systems that leverage LLMs that are much better at it, thanks to techniques like retrieval-augmented generation (RAG), supervisor LLMs, etc.
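For anyone who hasn’t seen RAG spelled out, here is a minimal sketch of the retrieval half, assuming a plain word-overlap score as a stand-in for a real embedding search and leaving out the actual model call. `retrieve` and `build_prompt` are hypothetical names, not any library’s API. The idea is that the model gets handed the relevant documentation, plus an explicit way to say “I don’t know”, instead of answering from whatever it absorbed in training.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for a real embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the question."""
    q = tokenize(question)
    # sorted() is stable (even with reverse=True), so ties keep document order
    return sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)[:k]

def build_prompt(question: str, docs: list[str], k: int = 2) -> str:
    """Ground the model in retrieved text and give it an explicit way out."""
    context = "\n".join(f"- {d}" for d in retrieve(question, docs, k))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say \"I don't know\".\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )

# Hypothetical documentation snippets for a made-up tool.
docs = [
    "The --retries flag sets how many times a request is retried.",
    "Logs are written to stderr by default.",
    "The cache lives in ~/.cache/tool.",
]
print(build_prompt("How many retries does the tool make?", docs))
```

In a real system the prompt would go to an LLM and the retriever would typically use vector embeddings; the instruction to stay inside the context is still only a request, which is why the supervisor/review patterns get layered on top.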

> When the problem is poorly defined and/or the answer isn’t as well documented or has a lot of nuance, they then do a spectacular job of generating bullshit.

TBH, so would a random person in such a situation (if they produced anything at all).

As an example: how often have you heard about a company’s marketing department over-hyping an upcoming product, resulting in unmet consumer expectations, a ton of extra work for the product’s developers and engineers, or both? This happens because those marketers don’t really understand the product, either because they don’t have the information, didn’t read it, got conflicting information, or have information written for a different audience (i.e., a developer, not a marketer), so the nuance is lost in translation.

At the company level, you can structure a system that marketers work within that will result in them providing more correct information. That starts with them being given all of the correct information in the first place. However, even then, the marketer won’t be solving problems like a developer. But if you ask them to write some copy to describe the product, or write up a commercial script where the product is used, or something along those lines, they can do that.

And yet the marketer’s role here is still more complex than anything our existing AI systems do, but those systems already incorporate patterns very similar to the ones a marketer uses day-to-day. AI researchers (academic, corporate, and hobbyist) are looking into more ways this can be done.

If we want an AI system to be able to solve problems more reliably, we have to, at minimum:

- break down the problems into more consumable parts

- ensure that components are asked to solve problems they’re well-suited for, which means that we won’t be using an LLM - or even necessarily an AI solution at all - for every problem type that the system solves

- have a feedback loop / review process built into the system
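Here is a minimal sketch of the feedback-loop point, assuming the task is “emit a JSON object with certain keys”. The review step is ordinary deterministic code rather than another model, which also illustrates the second point: not every component needs to be an LLM. `generate` is a hypothetical stand-in for whatever produces drafts (an LLM call, in practice); it receives the reviewer’s last complaint so it can try again.

```python
import json
from typing import Callable, Optional

def review(output: str, required: set[str]) -> Optional[str]:
    """Deterministic check: return a description of the problem, or None if OK."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError as err:
        return f"not valid JSON: {err.msg}"
    if not isinstance(data, dict):
        return "expected a JSON object"
    missing = required - set(data)
    if missing:
        return f"missing keys: {sorted(missing)}"
    return None

def generate_with_review(generate: Callable[[Optional[str]], str],
                         required: set[str], max_attempts: int = 3) -> str:
    """Draft, review, and feed the complaint back until it passes or we give up."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        draft = generate(feedback)  # e.g. an LLM call whose prompt includes `feedback`
        feedback = review(draft, required)
        if feedback is None:
            return draft
    raise RuntimeError(f"gave up after {max_attempts} attempts: {feedback}")

# Hypothetical scripted "model" that only gets it right on the third try.
drafts = iter(['{"name": "x"', '{"name": "x"}', '{"name": "x", "version": 1}'])
print(generate_with_review(lambda feedback: next(drafts), {"name", "version"}))
```

Raising an error after the last attempt, rather than returning the final draft anyway, is the design choice that matters here: the system reports failure instead of passing along something plausible-looking.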

In terms of what they can accept as input, LLMs have a huge amount of flexibility - much higher than what they appear to be good at and much, much higher than what they’re actually good at. They’re a compelling hammer. System designers need to not just be aware of which problems are nails and which are screws or unpainted wood or something else entirely, but also ensure that the systems can perform that identification on their own.

This is an absolutely wonderful graph. Thank you for teaching me about the trough of disillusionment.

I think “hallucinating” and “bullshitting” are pretty much synonyms in the context of LLMs. And I think they’re both equally imperfect analogies for the exact same reasons. When we talk about hallucinators & bullshitters, we’re almost always talking about beings with consciousness/understanding/agency/intent (people usually, pets occasionally), but spicy autocompleters don’t really have those things.

But if calling them “bullshit machines” is more effective communication, that’s great—let’s go with that.

Saying that they bullshit reminds me of Harry Frankfurt’s *On Bullshit*, which distinguishes between lying and bullshitting: “The main difference between the two is intent and deception.” But again, I think it’s a bit of a stretch to say LLMs have intent.

I might say that LLMs hallucinate/bullshit, and the rules & guard rails that developers build into & around them are attempts to mitigate the madness.

I totally agree that both seem to imply intent, but IMHO hallucinating is something that seems to imply not only more agency than an LLM has, but also less culpability. Like, “Aw, it’s sick and hallucinating, otherwise it would tell us the truth.”

Whereas calling it a bullshit machine still implies more intentionality than an LLM is capable of, but at least skews the perception of that intention more in the direction of “It’s making stuff up” which seems closer to the mechanisms behind an LLM to me.

I also love that the researchers actually took the time to not only provide the technical definition of bullshit, but also sub-categorized it too, lol.

I think for the sake of mixed company and delicate sensibilities we should refer to this as a “BM” rather than a “bullshit machine”. Therefore it could be an LLM BM, or simply a BM.

Large Bowel Movement, got it.

@davel Very well said. I’ll continue to call it bullshit because I think that’s still a closer and more accurate term than “hallucinate”. But it’s far from the perfect descriptor of what AI does, for the reasons you point out.

@davel @ajsadauskas I enjoy the bullshitting analogy, but regression to mediocrity seems most accurate to me. I think it makes sense to call them mediocrity machines. (h/t @ElleGray)

I mean it’s all semantics. ChatGPT regurgitates shit it finds on the internet. Often the internet is full of bullshit, so no one should be surprised when CGPT says bullshit. It has no way to parse truth from fiction. Much like many people don’t.

A good LLM will be trained on scientific data and training materials and books and shit, not random internet comments.

If it doesn’t know, it should ask you to clarify or say “I don’t know”, but it never does that. That’s truly the most ignorant part. I mean, imagine a person who can’t say “I don’t know” and never asks questions. Like they’re conversing with Kim Jong Il. You would never trust them.

I work with software and my coworkers will occasionally tell me they ran something by ChatGPT instead of just reading the documentation. Every time it’s a bullshit waste of everyone’s time.

GPT-4 can lie to reach a goal or serve an agenda.

I doubt most of its hallucinated outputs are deliberate, but it can choose to use deception as a logical step.

Ehh, I mean, it’s not really surprising it knows how to lie and will do so when asked to lie to someone as in this example (it was prompted not to reveal that it is a robot). It can see lies in its training data, after all. This is no more surprising than “GPT can write code.”

I don’t think GPT-4 is Skynet material. But maybe GPT-7 will be, with the right direction. Slim possibility, but it’s a real concern.

Sometimes a bullshitter is what you need. Ever looked at a multiple choice exam in a subject you know nothing about but feel like you could pass anyway just based on vibes? That’s a kind of bullshitting, too. There are a lot of problems like that in my daily work between the interesting bits, and I’m happy that a bullshit engine is good enough to do most of that for me with my oversight. Saves a lot of time on the boring work.

It ain’t a panacea. I wouldn’t give a gun to a monkey and I wouldn’t give chatgpt to a novice. But for me it’s awesome.

LLMs are conversation engines (hopefully that’s not controversial).

Imagine if Google was a conversation engine instead of a search engine. You could enter your query and it would essentially describe, in conversation to you, the first search result. It would basically be like searching Google and using the “I’m feeling lucky” button all the time.

Google, even in its best days, would be a horrible search engine by the “I’m feeling lucky” standard, assuming you wanted an accurate result and accurate means “the system understood me and provided real information useful to me”. Instead, Google returns (or returned?) millions or billions of results in response to your query, and we’ve become accustomed to finding what we want within the first 10 results or tweaking the search.

I don’t know if LLMs are really less accurate than a search engine from that standpoint. They “know” many things, but a lot of it needs to be verified. It might not be right on the first or 2nd pass. It might require tweaking your parameters to get better output. It has billions of parameters but regresses to some common mean.

If an LLM returned results like a search engine instead of a conversation engine, I imagine it might return billions of results, most of them nonsense (but generally easy for a human to detect), and you’d probably still get what you want within the first 10 results, or you’d tweak your parameters.

(Accordingly, I don’t really see LLMs saving all that much practical time either. They process data and parse requests differently, but the need to verify their output means we still end up with a lot of the same back-and-forth we had before. It’s just different.)

(BTW this is exactly how Stable Diffusion and Midjourney work if you think of them as searching the latent space of the model and the prompt as the search query.)

edit: oh look, a troll appeared and immediately disappeared. nothing of value was lost.

What a load of BS hahahaha. LLMs are not conversation engines (wtf is that lol, more PR bullshit hahahaha). LLMs are just statistical autocomplete machines. Literally, they just predict the next token based on previous tokens and their training data. Stop trying to make them more than they are.

You can make them autocomplete a conversation and use them as chatbots, but they are not designed to be conversation engines hahahaha. You literally have to feed the LLM everything in the conversation, including its own previous outputs, to get it to autocomplete a coherent conversation. And it’s only coherent if you care just about shape. When you care about content they are pathetically wrong all the time. It’s just a hack to create smoke and mirrors, and it only works because humans are great at anthropomorphizing machines, and objects, and …
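To make the “autocomplete a conversation” point concrete, here is a toy sketch in which the “model” is just a bigram table that greedily appends the most frequent next word. Everything in it is hypothetical and vastly simpler than an LLM (a real model conditions on its whole context window, not just the last word), but the chat loop around it has the same shape: every turn, the entire transcript, including the bot’s own earlier replies, is flattened into one prompt and the model simply continues the text.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus: str) -> dict[str, Counter]:
    """Count which word follows which; a toy stand-in for a trained model."""
    table: dict[str, Counter] = defaultdict(Counter)
    words = corpus.split()
    for prev, nxt in zip(words, words[1:]):
        table[prev][nxt] += 1
    return table

def autocomplete(table: dict[str, Counter], prompt: str,
                 max_tokens: int = 8, stop: str = "User:") -> str:
    """Greedy next-token prediction: repeatedly append the most frequent follower."""
    tokens = prompt.split()
    generated: list[str] = []
    for _ in range(max_tokens):
        followers = table.get(tokens[-1])
        if not followers:
            break  # never seen this word, so there is nothing to predict
        nxt = followers.most_common(1)[0][0]
        if nxt == stop:
            break  # the model would start the user's turn next, so stop here
        tokens.append(nxt)
        generated.append(nxt)
    return " ".join(generated)

def render_transcript(history: list[str]) -> str:
    """Flatten the whole conversation into one prompt ending with the bot's cue."""
    return "\n".join(history) + "\nBot:"

def chat_turn(table: dict[str, Counter], history: list[str], user_msg: str) -> str:
    """One 'conversation' turn: append, re-send everything, autocomplete."""
    history.append(f"User: {user_msg}")
    reply = autocomplete(table, render_transcript(history))
    history.append(f"Bot: {reply}")  # the bot's output becomes part of the next prompt
    return reply

table = train_bigrams("User: hi Bot: hello there User: hi Bot: hello there User:")
history: list[str] = []
print(chat_turn(table, history, "hi"))  # hello there
print(render_transcript(history))
```

Nothing is remembered inside the “model” between turns; the caller re-sends the history every time, which is the hack being described.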

Then you go and compare ChatGPT to literally the worst search feature in Google. Like, have you ever met anyone using the “I’m Feeling Lucky” button in Google in the last 10 years? Don’t get me wrong, fuck Google and their abysmal search quality. But ChatGPT is not even close to being comparable to that, which is pathetic.

And then you handwave the real issue with these stupid models when it comes to search results. As if getting 10 or so equally convincing, equally good-looking, equally bullshit-filled answers from an LLM were equivalent to getting 10 links from a search engine hahahaha. Come on man, the way I filter search engine results is by the reputation of the linked sites, by looking at the content surrounding the “matched” text that google/bing/whatever shows, etc. None of that is available in an LLM output. You would just get 10 equally plausible answers; good luck telling them apart.

I’m stopping here, but jesus christ. What a bunch of BS you are saying.

@ajsadauskas @mxtiffanyleigh @technology Hmmmm, I’m seeing replies not engaging with the arguments in this link & the paper it cites…

Apple’s guy in charge of those systems called “hallucinations” exactly that last night in an on-stage interview with John Gruber.

@ajsadauskas @mxtiffanyleigh @technology

We finally have perfect #mirrors.

To see anything there at all in a mirror, there are three easy actions:

- I can spend my time flexing.

- I can make the time to shape myself up.

- I can ask better questions.

Pick yours. Stop laying it on #LLMs. They only work with what humans put into them at that second. #Compassion for any struggle to make order out of the #other.