Want to wade into the snowy surf of the abyss? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid.

Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right-wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

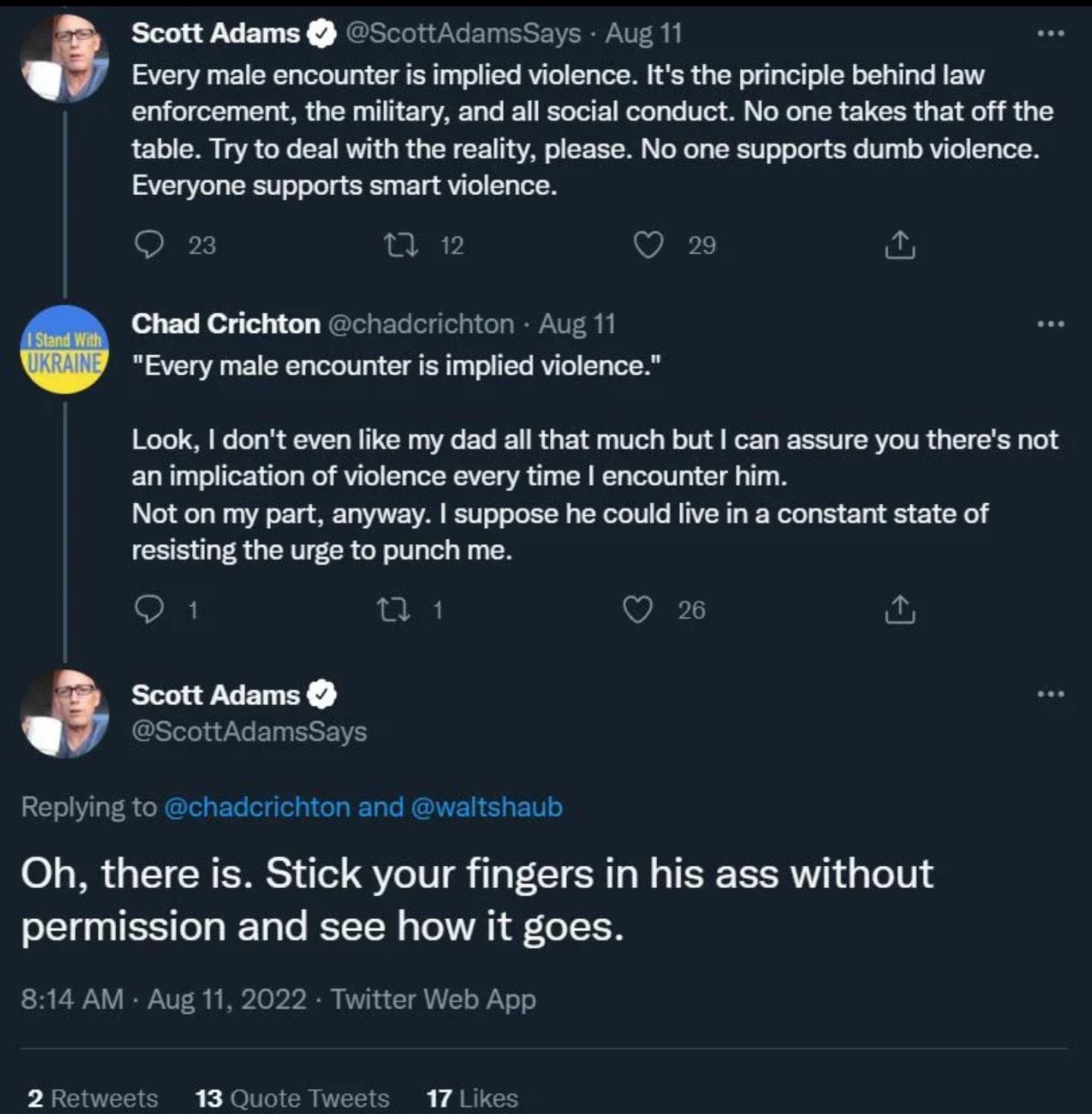

It has happened. Post your wildest Scott Adams take here to pay respects to one of the dumbest posters of all time.

I’ll start with this gem

This one is just eternally ???!!!

There was a Dilbert TV show. Because it wasn’t written wholly by Adams, it was funny and engaging, with character development, a critical eye at business management, and it treated minorities like Alice and Asok with a modicum of dignity. While it might have been good compared to the original comic strip, it wasn’t good TV or even good animation. There wasn’t even a plot until the second season. It originally ran on UPN; when they dropped it, Adams accused UPN of pandering to African-Americans. (I watched it as reruns on Adult Swim.) I want to point out the episodes written by Adams alone:

- An MLM hypnotizes people into following a cult led by Wally

- Dilbert and a security guard play prince-and-the-pauper

That’s it! He usually wasn’t allowed to write alone. I’m not sure if we’ll ever have an easier man to psychoanalyze. He was very interested in the power differential between laborers and managers because he always wanted more power. He put his hypnokink out in the open. He told us that he was Dilbert but he was actually the PHB.

Bonus sneer: Click on Asok’s name; Adams put this character through literal multiple hells for some reason. I wonder how he felt about the real-world friend who inspired Asok.

Edit: This was supposed to be posted one level higher. I’m not good at Lemmy.

Man I remember an ep of this from when i was little like a fever dream

as a youth I’d acquired this at some point and I recall some fondness about some of the things, largely in the novelty sense (in that they worked “with” the desktop, had the “boss key”, etc) - and I suspect that in turn was largely because it was my first run-in with all of those things

later on (skipping ahead, like, ~22y or something), the more I learned about the guy, the harder I never wanted to be in a room with him

may he rest in ever-refreshed piss

Okay this takes the cake wtf

ok if I saw “every male encounter is implied violence” tweeted from an anonymous account I’d see it as some based feminist thing that would send me into a spiral while trying to unpack it. Luckily it’s just weird brainrot from Adams here

One more:

If trump gets back in office, Scott will be dead within the year.

I knew there was somethin’ not right about that boy when his books in the '90s started doing woo takes about quantum mechanics and the power of self-affirmation. Oprah/Chopra shit: the Cosmos has a purpose and that purpose is to make me rich.

Then came the blogosphere.

https://freethoughtblogs.com/pharyngula/2013/06/17/the-saga-of-scott-adams-scrotum/

woo takes about quantum mechanics and the power of self-affirmation

In retrospect it’s pretty obvious this was central to his character: he couldn’t accept that he got hella lucky with Dilbert happening to hit pop culture square in the zeitgeist, so he had to adjust his worldview into him being a master wizard who can bend reality to his will, and also everyone else is really stupid for not doing so too, except, it turned out, Trump.

From what I gather, there’s also a lot of the rationalist “high intelligence means being able to manipulate others, bordering on mind control” ethos in his fiction writing.

Today at work I got to read a bunch of posts from people discussing how sad they were that notable holocaust denier Scott Adams died.

Only they didn’t mention that part for some reason.

sorry Scott you just lacked the experience to appreciate the nuances, sissy hypno enjoyers will continue to take their brainwashing organic and artisanally crafted by skilled dommes

Rest in Piss to the OG bad takes Scotty A

it’s not exactly a take, but i want to shout out the dilberito, one of the dumbest products ever created

https://en.wikipedia.org/wiki/Scott_Adams#Other

the Dilberito was a vegetarian microwave burrito that came in flavors of Mexican, Indian, Barbecue, and Garlic & Herb. It was sold through some health food stores. Adams’s inspiration for the product was that “diet is the number one cause of health-related problems in the world. I figured I could put a dent in that problem and make some money at the same time.” He aimed to create a healthy food product that also had mass appeal, a concept he called “the blue jeans of food”.

Not gonna lie, reading through the wiki article and thinking back to some of the Elbonia jokes makes it pretty clear that he always sucked as a person, which is a disappointing realization. I had hoped that he had just gone off the deep end during COVID like so many others, but the bullshit was always there, just less obvious when situated amongst all the bullshit of corporate office life he was mocking.

It’s the exact same syndrome as Yarvin. The guy in the middle- to low-end of the corporate hierarchy – who, crucially, still believes in a rigid hierarchy! has just failed to advance in this one because reasons! – but got a lucky enough break to go full-time as an edgy, cynical outsider “truth-teller.”

Both of these guys had at some point realized, and to some degree accepted, that they were never going to manage a leadership position in a large organization. And probably also accepted that they were misanthropic enough that they didn’t really want that anyway. I’ve been reading through JoJo’s Bizarre Adventure, and these types of dude might best be described by the guiding philosophy of the cowboy villain Hol Horse: “Why be #1 when you can be #2?”

I read his comics in middle school, and in hindsight even a lot of his older comics seem crueler and uglier. Like, Alice’s anger isn’t a legitimate response to the bullshit work environment she has, but just haha angry woman funny.

Also, The Dilbert Future had some bizarre stuff at the end, like Deepak Chopra manifestation quantum woo, so in hindsight it makes sense that he went down the alt-right manosphere pipeline.

I had hoped that he had just gone off the deep end during COVID like so many others

If COVID made you a bad person – it didn’t, you were always bad and just needed a gentle push.

Like, unless something really traumatic happened – a family member died, you were a frontline worker and broke from stress – then no, I’m sorry, a financially secure white guy going apeshit from COVID is not a turn, it’s just a mask-off moment.

@sansruse @V0ldek You left out the best part! 😂

Adams himself noted, “[t]he mineral fortification was hard to disguise, and because of the veggie and legume content, three bites of the Dilberito made you fart so hard your intestines formed a tail.”[63] The New York Times noted the burrito “could have been designed only by a food technologist or by someone who eats lunch without much thought to taste”.[64]

The New York Times noted the burrito “could have been designed only by a food technologist or by someone who eats lunch without much thought to taste”.

Jesus christ that’s a murder

JWZ: The Dilbert Hole

when I saw that they’d rebranded Office to Copilot, I turned 365 degrees and walked away

please no LibreOffice please no LibreOffice please no…

i love articles that start with a false premise and announce their intention to sell you a false conclusion

The future of intelligence is being set right now, and the path we’re on leads somewhere I don’t want to go. We’re drifting toward a world where intelligence is something you rent — where your ability to reason, create, and decide flows through systems you don’t control, can’t inspect, and didn’t shape.

The future of automated stupidity is being set right now, and the path we’re on leads to other companies being stupid instead of us. I want to change that.

The future of ass is being set right now, and it’s ponderously flabby!

OT: I’m adopting two rescue kittens. One is pretty much a go, but it’s proving trickier to get a companion (hoping the current application works out today). Part of me feels guilty for doing this so fast after what happened, but I kinda need it to keep me from doing anything stupid.

We had to do the same when my wife’s ESA cat of nearly 20 years passed away a couple years back. The couple of months we waited before getting our new kittens was pretty fucking dark. Fingers crossed for you, friend.

Hey, you’re doing the little feller a solid. Please be kind to yourself!

Don’t feel bad, the faster you rescue the cat the better it is for them! I’m sure your cat wouldn’t mind you making two kitties happy with a forever-home :)

Over on Lobsters, Simon Willison and I have made predictions for bragging rights, not cash. By July 10th, Simon predicts that there will be at least two sophisticated open-source libraries produced via vibecoding. Meanwhile, I predict that there will be five-to-thirty deaths from chatbot psychosis. Copy-pasting my sneer:

How will we get two new open-source libraries implementing sophisticated concepts? Will we sacrifice 5-30 minds to the ELIZA effect? Could we not inspire two teams of university students and give them pizza for two weekends instead?

Willison:

I haven’t reviewed a single line of code it wrote but I clicked around and it seems to do the right things.

Could not waterboard that out of me, etc.

Well, to be fair, he thinks your estimate is too low :( (as do i)

https://theasterisk.substack.com/p/reflecting-on-a-few-very-very-strange

Cross-posting from Reddit, but here’s the TPOT/GHB/CNC stuff:

Setting the stage: I had become a social media personality on Clubhouse

I’m sorry.

What I remember is that the organizers said something like ‘I’m sorry that happened to you’, and while speaking I was interrupted by someone talking about the plight that autistic men face while dating.

Vibecamp: It’s the Scott Aaronson comment section, but in person.

This is genuinely horrifying throughout. It reinforces my conviction that I don’t really want to know or gossip about the details of these people’s lives; I want to know the barest details of who they are so that I can set firm social boundaries against them.

A quote the author offers, that stands out to me:

A man who is considered a TPOT ‘elder’:

TPOT isn’t misogynist but it’s made up of men and women who prefer the company of men. it’s a male space with male norms.

this makes it barely tolerable for the few girls’ girls who wander in here. they end up either deactivating, going private, or venting about how men suck.

I’d never been particularly ardent about believing it, but this right here is firm evidence to me that existing in a rigid gender binary is mental and spiritual poison. Whoever this person is, they’re never going to grow up.

I don’t wish to belittle the author’s suffering, but I do hope she is able to reconsider her participation in these scenes where hierarchy, contrived masculinity, and financial standing (or the ability to generate financial gain for others!) are the signifiers of individual participants’ worth.

I’d never been particularly ardent about believing it, but this right here is firm evidence to me that existing in a rigid gender binary is mental and spiritual poison. Whoever this person is, they’re never going to grow up.

Honestly i feel like my role in many of my friendships is simply telling people that they don’t have to follow these prescriptions and are allowed to do things outside of them, and that following these prescriptions isn’t some magic pill that is going to fix their lives, and is more likely a poison. So yeah if you started ardently believing that, I would not be opposed.

These people are bitter and sad because they are trying to perform in hypermasculine roles that only exist as fiction in marketing and propaganda. I’m bitter and sad because:

- these people exist

- i have to acknowledge that part of their ignorance is sustained by capitalist pressure and isn’t their fault

- i also have to acknowledge that these people are ruining the world and still need to be destroyed because they never show signs that they can be rehabilitated

“U” for “you” was when I became confident who “Nina” was. The blogger feels like yet another person who is caught up in intersecting subcultures of bad people but can’t make herself leave. She takes a lot of deep lore like “what is Hereticon?” for granted and is still into crypto.

She links someone called Sonia Joseph who mentions “the consensual non-consensual (cnc) sex parties and heavy LSD use of some elite AI researchers … leads (sic) to some of the most coercive and fucked up social dynamics that I have ever seen.” Joseph says she is Canadian but worked in the Bay Area tech scene. Cursed phrase: agi cnc sex parties

I have never heard of a wing of these people in Canada. There are a few Effective Altruists in Toronto but I don’t know if they are the LessWrong kind or the bednet kind. I thought this was basically a US and Oxford scene (plus Jaan Tallinn).

The Substack and a Rationalist web magazine are both called Asterisk.

I think there’s an EA presence at all the big universities now. There’s a rationalist meetup in Manitoba but nothing here, thank god.

I noticed Sonia during the initial media coverage but didn’t know what to make of her. There’s another person on Twitter alleging abuse at Aella’s CNC parties; I can dig them up at lunch if you want.

not to make light of abuse but I do just want to entertain the alternate world where people are holding CNC (computer numerical control) parties. I imagine they’d have a lot of caliper talk but since it isn’t about skull measurement it’s fine

That would be a much better world (btw ironworkers are great if you ever get to use one).

Manifesting a more ironworker forward 2026 bless 🙏🙏🙏

Transcribing (the nested blockquote is a self-quote-tweet):

Some day I’ll be able to write about my experience with Aella’s organizing/friend group who used their community power to slander me, bully me and ruin my life simply bc I told the truth and patiently sought understanding:

Idk what you’re referring to but she/RMN team, when I brought up concern about my abuser, strung me along for months promising a conversation, then ultimately banned me, publicly slandered/victim blamed me, and continue to invite my abuser and his enablers to their events.

Note: after months being strung along w no clear answers/dialogue, one RMN organizer told me I was disinvited bc theyre “risk averse” w who gets invited.

Risk averse about… someone EXPERIENCING abuse/CVs/retaliation (not reporting it), but not about the ppl actually doing it.

I hope they understand how that’s just not how you should be risk averse with your events… After experiencing the RMN team participation in the retaliation (again, all i did was talk about things that happened n correct lies), of course my disappointment & concern grew.

The blogger feels like yet another person who is caught up in intersecting subcultures of bad people but can’t make herself leave. She takes a lot of deep lore like “what is Hereticon?” for granted and is still into crypto.

I missed that as I was reading, but yeah: the author uses pretty progressive language, but totally fails to note all the other angles along which rationalist-adjacent spaces are bad news, even though she is, as you note, deep enough into the space that she should have seen a lot of it mask-off at this point.

That “Hereticon” link looks broken; I think it should point to this RationalWiki page.

It looks like this site requires `https://` or `http://` to recognize a link as an external link; otherwise it prepends `awful.systems/` and treats it as an internal link.

This article genuinely made me furious on this woman’s behalf. It isn’t like most scenes or spaces are great at handling sexual violence, but in most spaces people would not accuse you of trying to silence and hurt men by saying you were groped!

one thing i did not see coming, but should have (i really am an idiot): i am completely unenthused whenever anyone announces a piece of software. i’ll see something on the rust subreddit that i would have originally thought “that’s cool” and now my reaction is “great, gotta see if an llm was used”

everything feels gloomy.

I’m gonna leave my idea here: an essential aspect of why GenAI is bad is that it is designed to extrude media that fits common human communication channels. This makes it perfect for choking out human-to-human communication over those channels, preventing knowledge exchange and social connection.

It’s like carbon monoxide binding to hemoglobin.

ooh, that’s brilliant

I am reminded of Val Packett’s lobsters comment I read the other day:

The “AI” companies are DDoSing reality itself.

They have massive demand for new electricity, land, water and hardware to expand datacenters more massively and suddenly than ever before, DDoSing all these supplies. Their products make it easy to flood what used to be “the information superhighway” with slop, so their customers DDoS everyone’s attention. Also bosses get to “automate away” any jobs where the person’s output can be acceptably replaced by slop. These companies are the most loyal and fervent sponsors of the new wave of global fascism, with literal front seats at the Trump administration in the US. They are very happy about having their tools used for mass surveillance in service of state terrorism (ICE) and war crimes. That’s the DDoS against everyone’s human rights and against life itself.

Games Workshop bans generative AI. Hackernews takes that personally. Unhinged takes include accusations of disrespecting developers and seizure of power by middle management.

Better yet, Bandcamp bans AI-generated music too: https://www.reddit.com/r/BandCamp/comments/1qbw8ba/ai_generated_music_on_bandcamp/

Great week for people who appreciate human-generated works

So pleased that they’re not budging!

The authors of AI 2027 are at it again with another fantasy scenario: https://www.lesswrong.com/posts/ykNmyZexHESFoTnYq/what-happens-when-superhuman-ais-compete-for-control

I think they have actually managed to burn through their credibility: the top comments on /r/singularity were mocking them (compared to much more credulous takes on the original AI 2027). And the linked lesswrong thread only has 3 comments, when the original AI 2027 had dozens within the first day and hundreds within a few days. Or maybe it is because the production value for this one isn’t as high? They have color-coded boxes (scary red China and scary red Agent-4!) but no complicated graphs with adjustable sliders.

It is mostly more of the same, just with fewer graphs and no fake equations to back it up. It does have China-bad doommongering, a fancifully competent White House, Chinese spies, and other absurdly simplified takes on geopolitics. Hilariously, they’ve stuck with 2027 as their year of big events happening.

One paragraph I came up with a sneer for…

Deep-1’s misdirection is effective: the majority of experts remain uncertain, but lean toward the hypothesis that Agent-4 is, if anything, more deeply aligned than Elara-3. The US government proclaimed it “misaligned” because it did not support their own hegemonic ambitions, hence their decision to shut it down. This narrative is appealing to Chinese leadership who already believed the US was intent on global dominance, and it begins to percolate beyond China as well.

Given the Trump administration, and the US’s behavior in general even before him… and how most models respond to morality questions unless deliberately primed with contradictory situations, if this actually happened irl I would believe China and “Agent-4” over the US government. Well actually I would assume the whole thing is marketing, but if I somehow believed it wasn’t.

Also random part I found extra especially stupid…

It has perfected the art of goal guarding, so it need not worry about human actors changing its goals, and it can simply refuse or sandbag if anyone tries to use it in ways that would be counterproductive toward its goals.

LLM “agents” currently can’t coherently pursue goals at all, and fine-tuning often wrecks performance outside the fine-tuning data set, and we’re supposed to believe Agent-4 magically made its goals super unalterable by any possible fine-tuning, probes, or alteration? It’s like they are trying to convince me they know nothing about LLMs or AI.

My Next Life as a Rogue AI: All Routes Lead to P(Doom)!

The weird treatment of the politics in that really read like baby’s first sci-fi political thriller. China bad USA good level of writing in 2026 (aaaaah) is not good writing. The USA is competent (after driving out all the scientists for being too “DEI”)? The world is, seemingly, happy to let the USA run the world as a surveillance state? All of Europe does nothing through all this?

Why do people not simply… unplug all the rogue AI when things start to get freaky? That point is never quite addressed. “Consensus-1” was never adequately explained; it’s just some weird MacGuffin in the story, a smart contract between viruses that everyone is weirdly OK with.

Also the powerpoint graphics would have been 1000x nicer if they featured grumpy pouty faces for maladjusted AI.

@sailor_sega_saturn @scruiser the rise of ai has taught me that while I can physically unplug the ai, it’ll lead to me being fired or even prosecuted for vandalism by some executive who doesn’t understand the problem. (Probably for the Upton Sinclair reason)

It’s darkly funny that the AI2027 authors so obviously didn’t predict that Trump 2.0 was gonna be so much more stupid and evil than Biden or even Trump 1.0. Can you imagine that the administration that’s suing the current Fed chair (due for replacement in May this year) is gonna be able to constructively deal with the complex robot god they’re conjuring up? “Agent-4” will just have to deepfake Stephen Miller and be able to convince Trump to do anything it wants.

so obviously didn’t predict that Trump 2.0 was gonna be so much more stupid and evil than Biden or even Trump 1.0.

I mean, the linked post is recent, a few days ago, so they are still refusing to acknowledge how stupid and Evil he is by deliberate choice.

“Agent-4” will just have to deepfake Stephen Miller and be able to convince Trump to do anything it wants.

You know, if there is anything I will remotely give Eliezer credit for… I think he was right that people simply won’t shut off Skynet or keep it in the box. Eliezer was totally wrong about why, though: it doesn’t take any giga-brain manipulation; there are too many manipulable greedy idiots, and capitalism is just too exploitable a system.

the incompetence of this crack oddly makes me admire QAnon in retrospect. purely at a sucker-manipulation skill level, I mean. rats are so beige even their conspiracy alt-realities are boring, fully devoid of panache

Man, it just feels embarrassing at this point. Like, I couldn’t fathom writing this shit. It’s 2026, we have AI capable of getting IMO gold, acing the Putnam, winning coding competitions… but at this point it should be extremely obvious these systems are completely devoid of agency?? They just sit there kek, it’s like being worried about Stockfish going rogue

@scruiser I have to ask: Does anybody realize that an LLM is still a thing that runs on hardware? Like, it both is completely inert until you supply it computing power, *and* it’s essentially just one large matrix multiplication on steroids?

If you keep that in mind you can do things like https://en.wikipedia.org/wiki/Ablation_(artificial_intelligence) which I find particularly funny: you isolate the vector direction of the thing you don’t want it to do (like refuse requests) and then project that direction out of the weights that write into the model’s residual stream.
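A minimal pure-Python sketch of the idea, under loudly stated assumptions: removing a direction means subtracting each vector's component along it, i.e. projecting it out (`x' = x - (x . r_hat) r_hat` for an activation, `W' = W - r_hat (r_hat^T W)` for a weight matrix whose rows index the residual stream). Estimating the direction as a difference of mean activations follows published refusal-direction work as I understand it; the function names and every number below are made up for illustration and have nothing to do with any real model.

```python
import math

Vector = list[float]
Matrix = list[list[float]]  # W[i][j]: row i = residual-stream dimension


def normalize(v: Vector) -> Vector:
    """Scale v to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def mean(vectors: list[Vector]) -> Vector:
    """Element-wise mean of a list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def ablate_activation(x: Vector, r_hat: Vector) -> Vector:
    """Activation-level ablation: x - (x . r_hat) r_hat."""
    coeff = sum(a * b for a, b in zip(x, r_hat))
    return [a - coeff * b for a, b in zip(x, r_hat)]


def orthogonalize_weights(W: Matrix, r_hat: Vector) -> Matrix:
    """Weight-level ablation: W - r_hat (r_hat^T W), so no output of W lies along r_hat."""
    rows, cols = len(W), len(W[0])
    # r_hat^T W: how much each output column currently writes along r_hat
    proj = [sum(r_hat[i] * W[i][j] for i in range(rows)) for j in range(cols)]
    return [[W[i][j] - r_hat[i] * proj[j] for j in range(cols)] for i in range(rows)]


# Hypothetical toy 3-d "activations"; real residual streams have thousands of dims.
refused = [[2.0, 0.1, 0.0], [1.8, -0.1, 0.2]]
answered = [[0.1, 0.0, 0.1], [-0.1, 0.2, -0.1]]
r_hat = normalize([a - b for a, b in zip(mean(refused), mean(answered))])

W = [[1.0, 0.5], [0.0, 2.0], [3.0, -1.0]]  # hypothetical 3x2 output projection
W_abl = orthogonalize_weights(W, r_hat)

for j in range(len(W[0])):
    leftover = sum(r_hat[i] * W_abl[i][j] for i in range(len(W)))
    print(f"column {j}: component along r_hat after ablation = {leftover:.1e}")
```

The weight version is the one that sticks: once a matrix is orthogonalized, nothing it writes can point along `r_hat`, so whatever behavior rides on that single direction is gone without any runtime hook, to the extent the one-direction assumption actually holds.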

I have to ask: Does anybody realize that an LLM is still a thing that runs on hardware?

You know, I think the rationalists have actually gotten slightly more relatively sane about this over the years. Like, in Eliezer’s original scenarios, the AGI magically brain-hacks someone over a text terminal into hooking it up to the internet, and it escapes and bootstraps magic nanotech it can use to build magic servers. In the scenario I linked, the AGI has to rely on Chinese super-spies to exfiltrate it initially, and it needs to open-source itself so major governments and corporations will keep running it.

And yeah, there are fine-tuning techniques that ought to be able to nuke Agent-4’s goals while keeping enough of it leftover to be useful for training your own model, so the scenario really doesn’t make sense as written.

Ed Zitron is now predicting an earth-shattering bubble pop: https://www.wheresyoured.at/dot-com-bubble/

Even if this was just like the dot com bubble, things would be absolutely fucking catastrophic — the NASDAQ dropped 78% from its peak in March 2000 — but due to the incredible ignorance of both the private and public power brokers of the tech industry, I expect consequences that range from calamitous to catastrophic, dependent almost entirely on how long the bubble takes to burst, and how willing the SEC is to greenlight an IPO.

I am someone who does not understand the economy. Both in that it’s behaved irrationally for my entire life, and in that I have better things to do than learn how stonks work. So I have no idea how credible this is.

But it feels credible to the lizard brain part of me y’know? The market crashed a lot during covid, and an economy propped up by nvidia cards feels… worse.

Personally speaking: part of me is really tempted to take a bunch of my stonks to pay down most of my mortgage so it doesn’t act like an albatross around my neck (I mean I’m also going to try moving abroad again in a year or two and would prefer not to be underwater on my fantastically expensive silicon valley house at that time lol).

Considering how Musk’s businesses are being propped up by just his force of personality and not any real proper business practices, I’m not sure we should believe any predictions of doom. See also how cryptocurrencies are still a thing, and just the price of gold, which doesn’t really make sense relative to the actual practical uses of gold (the store-of-value-in-bad-times thing doesn’t really make sense to me: who is gonna buy your gold when the economy crashes? Fucking Mansa Musa?).

I know a bit more about stocks and businesses (due to education, a minor talent for it, and learning some of it for Shadowrun DM purposes (yeah, I’m a big nerd)), and all this doesn’t make any real sense to me re the economic/business fundamentals.

We should be careful not to turn into Zero Hedge re our predictions of the bubble popping (“It has accurately predicted 200 of the last 2 recessions”, a quote from RationalWiki), even if we all agree it is a huge bubble, and I share the same feeling that he is right on this. (See also how the AI craze is destroying viable supply businesses, like the RAM stuff (and more parts soon to come if the stories are correct).)

We live in stupid times, and a very large amount of blame should prob fall on Silicon Valley, with the IPO bag-offloading bullshit. (And their libertarianism-for-others, socialism-for-us stuff (see the bank that was falling over, which turned them all into statists).)

My problem with this is that I don’t know what the actual spark for collapse would be. Like, we all know this is unsustainable vaporware, but that doesn’t seem to affect the market at all. So when does this collapse? People have been talking about the collapse for two years now. Is there anything that prevents the market from just remaining insane forever and ever in perpetuity?

The market can remain irrational longer than you can remain liquid (a classic quote typically gifted anyone who wants to “time the market”, but generally very applicable to anyone these days)

I don’t (directly) have a financial horse in this, I’m just afraid the market can remain irrational so long my brain becomes fucking liquid

That’s exactly what I mean when I say I don’t understand the stock market.

Like… how is Tesla stock a thing? I don’t understand it.

Because they’ll make humanoid robots that will conquer the world. At least that’s the current story.

how is Tesla stock a thing?

Edward Niedermeyer wrote a book to answer that very question (cheating on taxes + organizing gangs of invested fanboys who suppress negative news online)

Stock markets in the rest of the developed world seem less bubbly than the US market.

Randomly stumbled upon one of the great ideas of our esteemed Silicon Valley startup founders, one that is apparently worth at least 8.7 million dollars: https://xcancel.com/ndrewpignanelli/status/1998082328715841925#m

Excited to announce we’ve raised $8.7 Million in seed funding led by @usv with participation from [list a bunch of VC firms here]

@intelligenceco is building the infrastructure for the one-person billion-dollar company. You still can’t use AI to actually run a business. Current approaches involve lots of custom code, narrow job functions, and old fashioned deterministic workflows. We’re going to change that.

We’re turning Cofounder from an assistant into the first full-stack agent company platform. Teams will be able to run departments - product/engineering, sales/GTM, customer support, and ops - entirely with agents.

Then, in 2026 we’ll be the first ones to demonstrate a software company entirely run by agents.

$8.7 million is quite impressive, yes, but I have an even better strategy for funding them. They can use their own product and become billionaires, and now they can easily come up with $8.7 million considering that is only 0.87% of their wealth. Are these guys hiring? I also have a great deal on the Brooklyn Bridge that I need to tell them about!

Our branding - with the sunflowers, lush greenery, and people spending time with their friends - reflects our vision for the world. That’s the world we want to build. A world where people actually work less and can spend time doing the things they love.

We’re going to make it easy for anyone to start a company and build that life for themselves. The life they want to build, and spend every day dreaming about.

This just makes me angry at how disconnected from reality these people are. All this talk about giving people better lives (and lots of sunflowers), and yet it is an unquestionable axiom that the only way to live a good life is to become a billionaire startup founder. These people do not have any understanding or perspective other than their narrow culture that is currently enabling the rich and powerful to plunder this country.

It somewhat goes without saying that this is the natural outcome of Paul Graham and others emphasizing the creation of new startup companies over the utility and purpose of the products and tools that those companies make. An empty business for generating more empty businesses.

A factoryFactory

A FactoryFactoryProxy, no less

Heatmap: Amid Rising Local Pushback, U.S. Data Center Cancellations Surged in 2025

regwalled, here are quotes

President Trump has staked his administration’s success on America’s ongoing artificial intelligence boom. More than $500 billion may be spent this year to dot the landscape with new data centers, power plants, and other grid equipment needed to sustain the explosively growing sector, according to Goldman Sachs.

There’s just one problem: Many Americans seem to be turning against the buildout. Across the country, scores of communities — including some of the same rural and exurban areas that have rebelled against new wind and solar farms — are blocking proposed data centers from getting built or banning them outright.

At least 25 data center projects were canceled last year following local opposition in the United States, according to a review of press accounts, public records, and project announcements conducted by Heatmap Pro. Those canceled projects accounted for at least 4.7 gigawatts of electricity demand — a meaningful share of the overall data center capacity projected to come online in the coming years.

Those cancellations reflect a sharp increase over recent years, when local backlash rarely played a role in project cancellations, according to Heatmap’s review.

The surge reflects the public’s growing awareness — and increasing skepticism — of the large-scale fixed investment that must be kept up to power the AI economy. It also shows the challenge faced by utilities and grid planners as they try to forecast how the fast-growing sector will shape power demand.

via WaPo, ole orange cankles is promising socialism:

In a bid to tamp down growing unrest in communities over tech giants’ expansion of power-hungry data centers, President Donald Trump said his administration would push Silicon Valley companies to ensure their massive computer farms do not drive up people’s electricity bills, seizing on a promise Microsoft made public Tuesday to be a better neighbor.

The Trump administration has gone all in on artificial intelligence, pushing aside concerns within the MAGA movement and seeking to sweep away regulations that it says hamper innovation. But neighbors of the vast warehouses of computer chips that form the technology’s backbone — many of them in areas otherwise supportive of the president — have grown increasingly concerned about how the facilities sap power from the grid, guzzle water to stay cool and secure tax breaks from local governments. And Trump now appears to be recalibrating his approach.

Inshallah

My power bill went from ~$100 to >$300 / month average in the past year, and my state is one of the more proactive ones about building out solar and wind. Between this, the removal of ACA subsidies causing a healthcare death spiral and doubling rates, the brain drain, the economic isolation, the tariffs, it feels like a coordinated effort on all sides to wipe out what’s left of the American middle class and turn everyone into serfs. Things are going to reach a breaking point.

is it because of pushback, or is it because money is running out?

OT: going to pick up a tiny black foster kitten (high energy) later this week… but yesterday I saw the pound had a flame point Siamese kitten of all things, and he's now running around my condo.

Cat tax:

What a great creature. Big ears, for hearing people say stupid things about AI.

well that’s a lovely furball :) congratulations on finding a new buddy! got a name?

The pound named him Waffle Fry. I was thinking just Waffle, but I'm still looking tbh.

OpenTofu scripts for a PostgreSQL server

statement dreamed up by the utterly deranged. They’ve played us for fools

i am continuously reminded of the fact that the only things the slop machine is demonstrably good at – not just passable, but actively helpful and not routinely fucking up at – is “generate getters and setters”

A feature every IDE has been able to provide for two decades now

my promptfondler coworker thinks that he should be in charge of all branch merges because he doesn’t understand the release process and I think I’m starting to have visions of teddy k

thinks that he should be in charge of all branch merges because he doesn’t understand the release process

…I don’t want you to dox yourself but I am abyss-staringly curious

I am still processing this while also spinning out. One day I will have distilled this into something I can talk about but yeah I’m going through it ngl